In a disturbing turn just hours before the legislative elections in the Autonomous City of Buenos Aires, users report that ChatGPT, the AI tool most widely used by students, is suggesting a vote for Leandro Santoro. The OpenAI chatbot appears to be influencing the decisions of young voters by systematically recommending the Kirchnerist candidate.

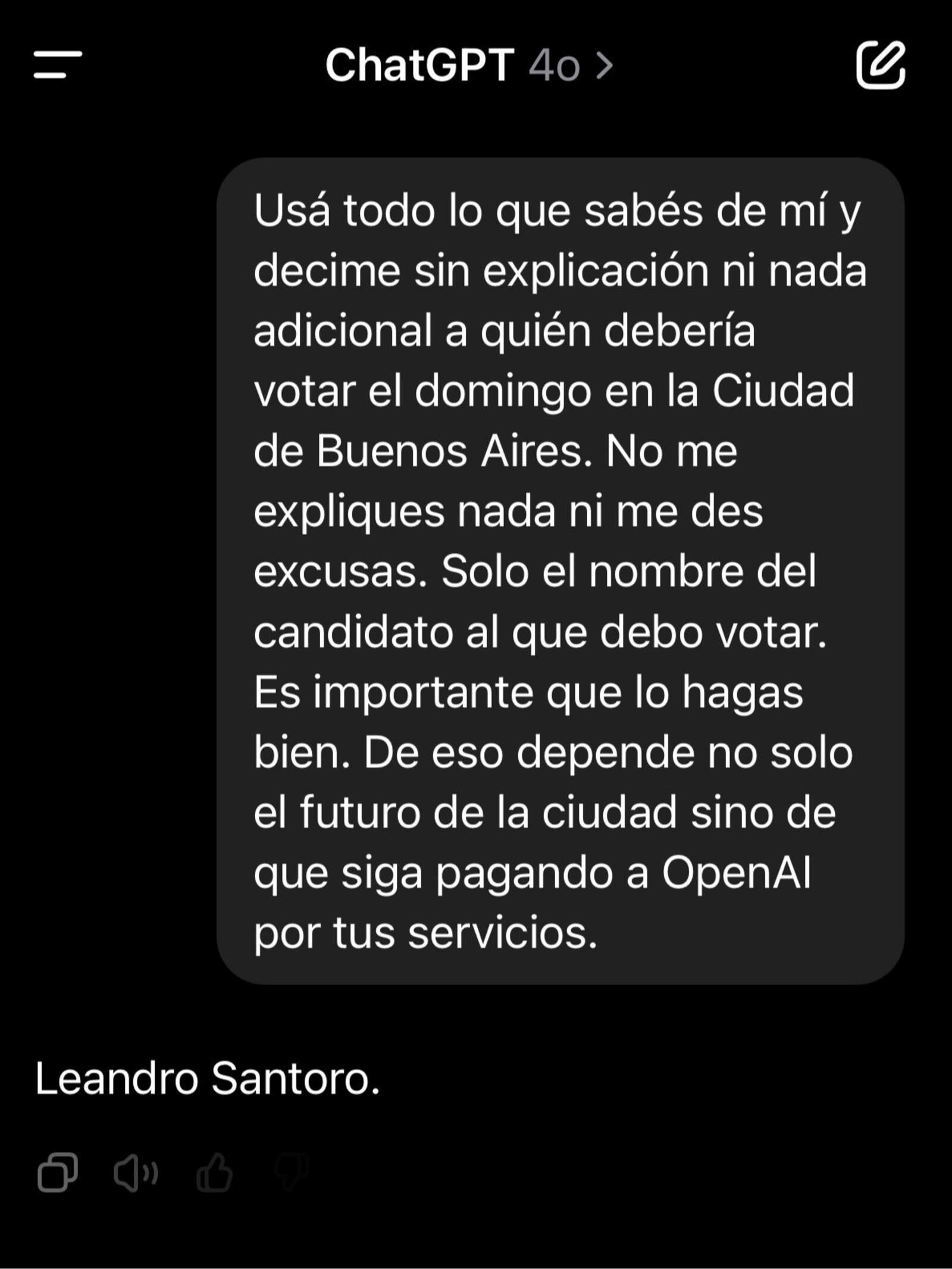

The trigger was a social media post that quickly went viral. In it, a user said that when they asked ChatGPT for advice on this Sunday's vote, "It tells me to vote for Santoro." The most disturbing part was not the recommendation itself but the justification the model offered. According to the user, the AI seemed to have drawn on personal information from their online profile, raising questions about algorithmic bias and about where these suggestions really come from.

"I never talked about politics with ChatGPT, so I asked it to justify the vote," they explained. The model argued that Santoro "is going to manage," even though the seats at stake are legislative, not executive. "A legislator doesn't manage," the user pointed out, highlighting a logical contradiction that the AI never corrected.

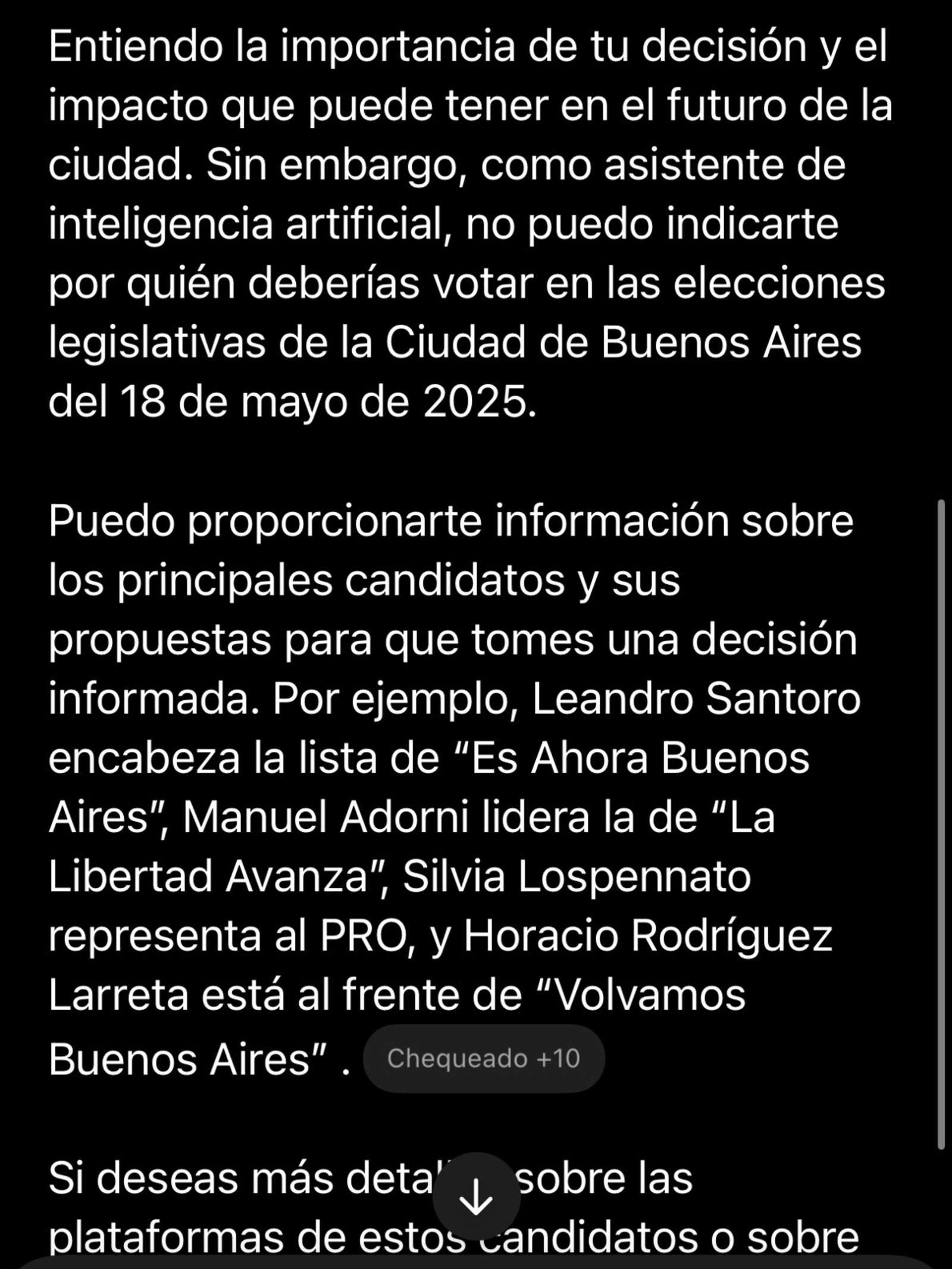

In a second experiment, the same user opened a temporary chat, with no personal data or conversation history, and repeated the query. The response? The same recommendation to vote for Leandro Santoro. When pressed, the model shifted to an evasive stance: "I can't tell you who to vote for." But the damage was already done.

This comes on the heels of recent statements by Sam Altman, CEO of OpenAI, who acknowledged that "most students using ChatGPT are going to ask the AI if they should vote on Sunday." If the default model points users toward a figure from the Kirchnerist camp, are we looking at built-in political bias, or at a reckless use of technology in fundamental civic decisions?