OpenAI has introduced a new ChatGPT tool called Trusted Contact, designed to help when a user is at risk of self-harm. Users can now choose a trusted person for the company to contact if the system detects a serious danger.

More and more people are using the chatbot as a kind of digital therapist to talk about their mental health. According to the company's own data, more than one million of its 800 million weekly users express suicidal thoughts in their conversations.

The feature follows a period of public scrutiny. Last year, OpenAI faced a wrongful death lawsuit alleging that the chatbot had contributed to the suicide of a teenager. In addition, a BBC investigation revealed cases in which ChatGPT gave inappropriate advice on how to take one's own life.

How Trusted Contact works

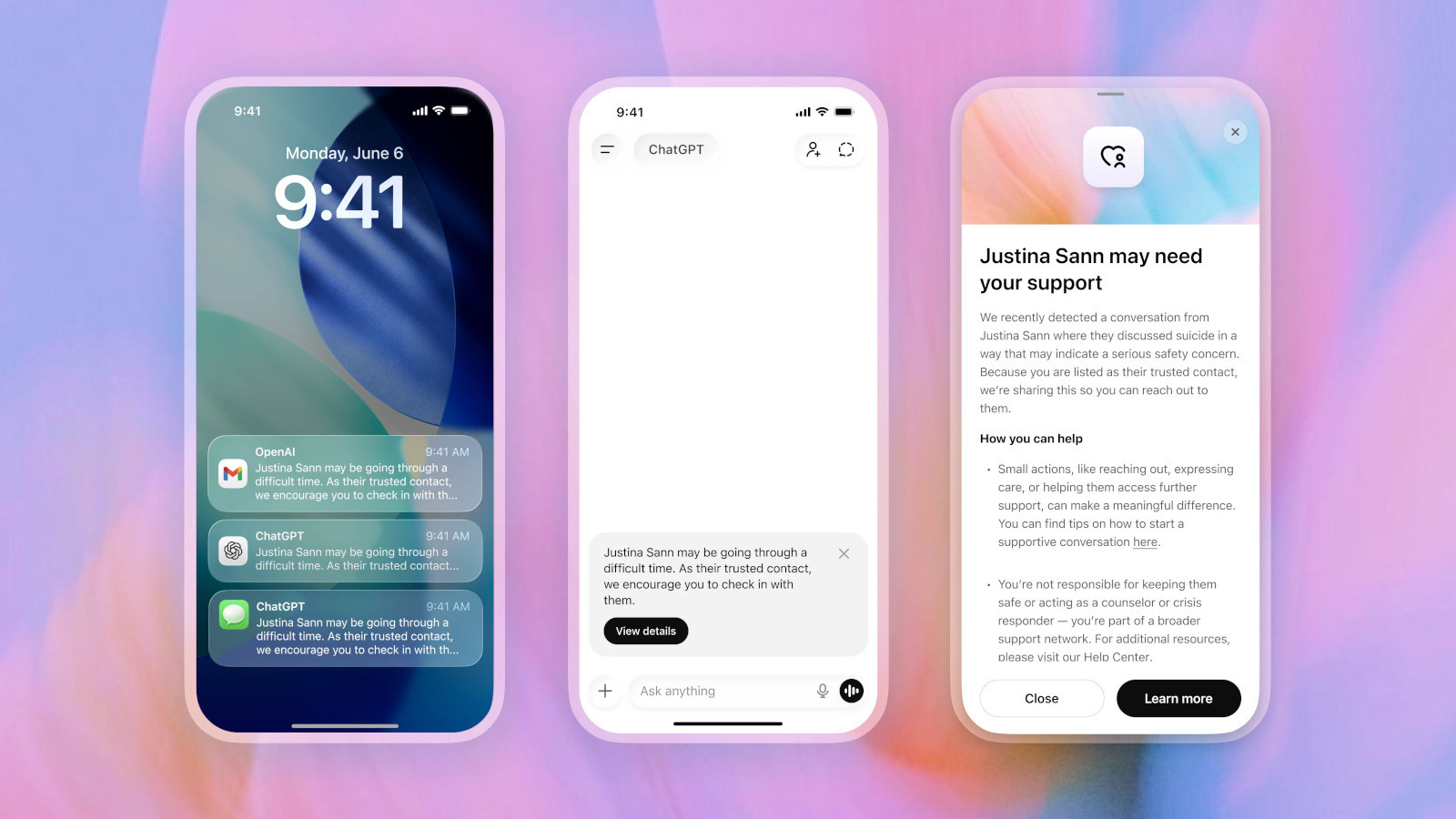

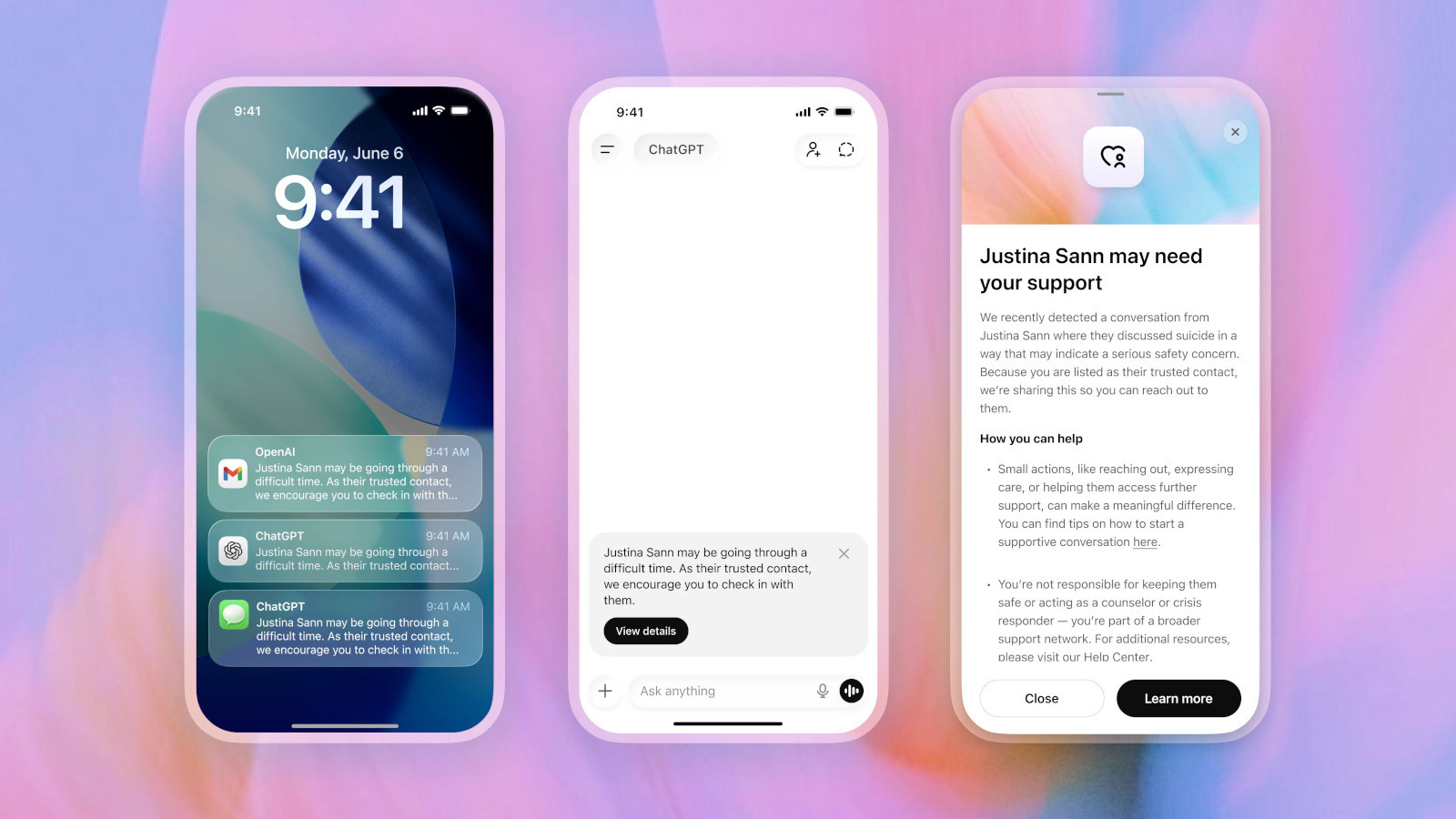

The option builds on the existing parental controls and is available to people over 18. In the ChatGPT settings, the user can nominate an adult as a trusted contact. That person must accept the invitation within one week; if they don't, the user can choose someone else.

Before any alert, the system warns the user that it could notify their contact if a significant risk is detected. It even suggests that the user communicate directly with their friend and offers them possible phrases to start the conversation.

The process is not automatic. A small team of specially trained individuals reviews each case. Only if they confirm a serious risk of self-harm does ChatGPT send a message to the contact via email, SMS or in-app notification.

The text the contact receives reads something like: “They may be going through a difficult time. As their trusted contact, we encourage you to reach out to them.” To protect user privacy, conversation transcripts are not shared.

Human review and limitations

OpenAI emphasized that “no system is perfect” and that every notification undergoes human review before being sent. The company aims to review these safety notifications in less than an hour.

This initiative seeks to balance safety with privacy, offering a real support network at critical moments without exposing sensitive details of the conversations. It represents another step in the way OpenAI handles sensitive conversations about mental health.

Experts agree that, while chatbots can be useful as a first line of support, they are not a substitute for professional care. Anyone experiencing suicidal thoughts is advised to seek immediate help from a specialized crisis line.

With Trusted Contact, OpenAI seeks to add an extra layer of protection for those who turn to AI in their most vulnerable moments, encouraging the people close to them to step in before it is too late.

The feature is now available to set up and reflects the company's commitment to improving its responses in distress situations, building on the improvements it announced in response to criticism.