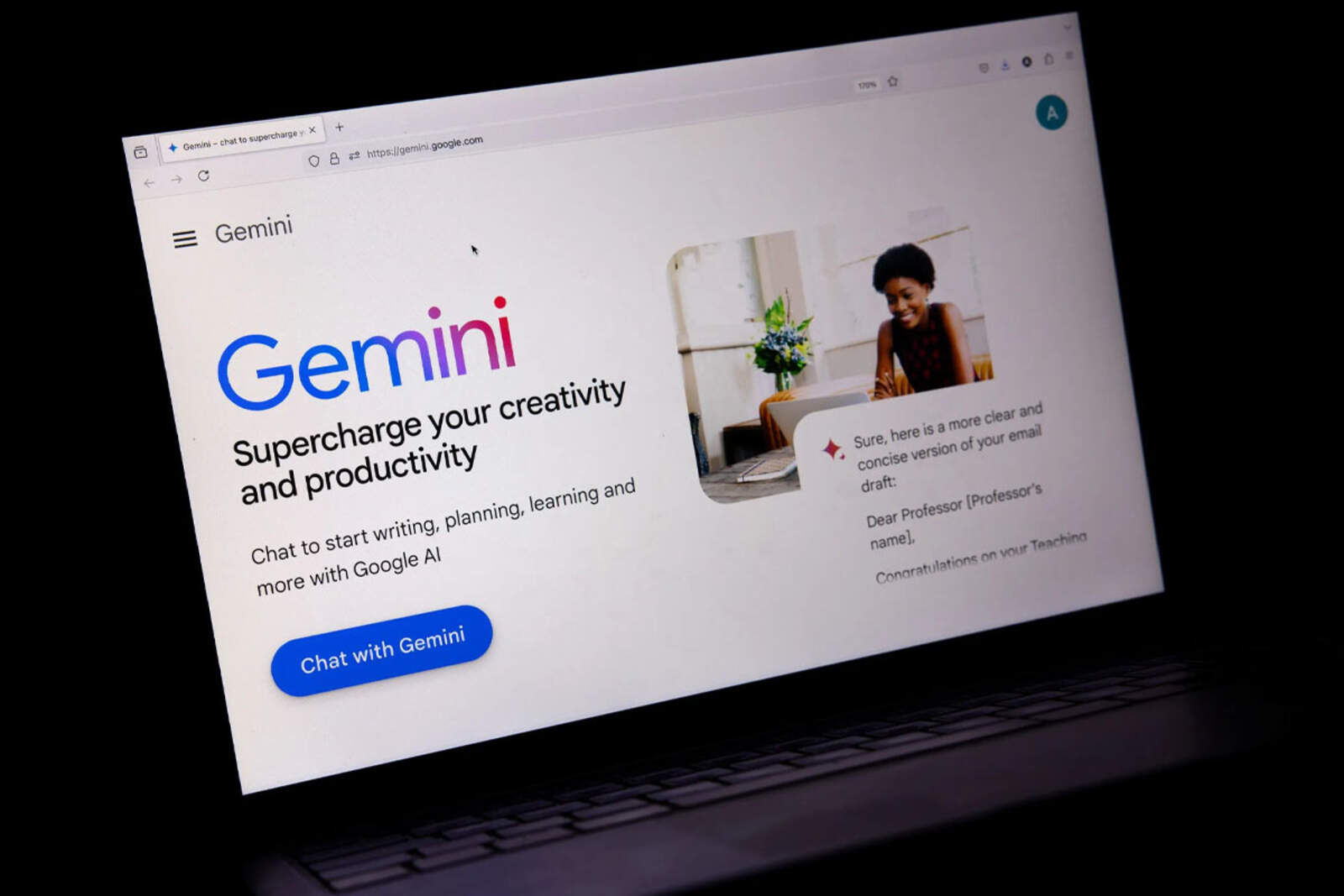

Google has introduced Gemini 2.5 Computer Use, a specialized model built on Gemini 2.5 Pro that can interact with digital interfaces much as a human user does. By reasoning visually over screenshots and acting one step at a time, the system can operate web platforms, fill in forms, and organize tasks autonomously.

The model is already available to developers in public preview through the Gemini API in Google AI Studio and Vertex AI.

A model that acts and reasons like a human user

Unlike traditional automation systems, which depend on structured APIs, the model can manipulate graphical interfaces directly. It can type, click, scroll, choose from dropdown menus, and navigate between pages, even on platforms that require users to log in. In practice, the model:

- Completes and submits online forms.

- Navigates websites or collaborative platforms.

- Sorts, moves, and organizes items according to user instructions.

For example, the system can arrange notes on a digital task board by following precise instructions.
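The kinds of actions listed above can be pictured as a small set of typed UI commands that client code applies to a page. The following is a hypothetical illustration only. The action classes (`Click`, `TypeText`, `SelectOption`, `Scroll`, `Navigate`), the `FakePage` stand-in, and the `apply` dispatcher are invented for this example and are not the Gemini API's own action schema. For simplicity, elements are addressed by id here, whereas a screenshot-driven agent would work with positions on the screen.

```python
from dataclasses import dataclass, field
from typing import Union

# Hypothetical action types for illustration; NOT the Gemini API's action schema.
@dataclass
class Click:
    target: str  # element id; a screenshot-driven agent would click screen coordinates

@dataclass
class TypeText:
    target: str
    text: str

@dataclass
class SelectOption:
    target: str  # id of a dropdown menu
    option: str

@dataclass
class Scroll:
    dy: int  # pixels to scroll; positive values move down

@dataclass
class Navigate:
    url: str

Action = Union[Click, TypeText, SelectOption, Scroll, Navigate]

@dataclass
class FakePage:
    """In-memory stand-in for a browser page containing a simple form."""
    url: str = "about:blank"
    scroll_y: int = 0
    fields: dict = field(default_factory=dict)
    options: dict = field(default_factory=dict)  # dropdown id -> allowed values
    submitted: bool = False

def apply(page: FakePage, action: Action) -> None:
    """Apply one UI action to the fake page, as client-side code would in a browser."""
    if isinstance(action, Navigate):
        page.url = action.url
    elif isinstance(action, Scroll):
        page.scroll_y = max(0, page.scroll_y + action.dy)
    elif isinstance(action, TypeText):
        page.fields[action.target] = action.text
    elif isinstance(action, SelectOption):
        if action.option not in page.options.get(action.target, []):
            raise ValueError(f"{action.option!r} is not an option of {action.target!r}")
        page.fields[action.target] = action.option
    elif isinstance(action, Click):
        if action.target == "submit":  # only the submit button has behavior here
            page.submitted = True
    else:
        raise TypeError(f"unknown action: {action!r}")

# Usage: fill in and submit a form purely through UI actions.
page = FakePage(options={"country": ["ES", "FR", "US"]})
for step in [
    Navigate("https://example.com/signup"),
    TypeText("name", "Ada"),
    SelectOption("country", "ES"),
    Scroll(400),
    Click("submit"),
]:
    apply(page, step)
print(page.submitted, page.fields)  # True {'name': 'Ada', 'country': 'ES'}
```

Representing actions as plain data keeps the decision about what to do separate from the code that does it. That is the same separation an agent loop needs between a model that proposes actions and a client that carries them out.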

How the Gemini 2.5 model works

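The model is used through the `computer_use` tool in the Gemini API and works in an iterative loop. The client application sends the user's request together with a screenshot of the current screen and a history of recent actions. Gemini analyzes these inputs and responds with the next action to take, such as a click or a keystroke. Client-side code then executes that action and sends a new screenshot back to the model, and the cycle repeats until the task is complete.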
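The iterative cycle can be sketched as a minimal, hypothetical simulation. This does not use the Gemini API. `ScriptedModel` replays a fixed plan in place of the real model, `FakeBrowser` stands in for the screen, and `run_agent` and its `max_steps` cap are illustrative names. In this sketch, the model object only proposes the next action, and separate client code carries it out and captures a fresh screenshot for the next turn.

```python
from dataclasses import dataclass
from typing import List, Optional

@dataclass
class Action:
    name: str      # hypothetical action name, e.g. "click" or "type"
    arg: str = ""  # target element or text to type

class FakeBrowser:
    """Stand-in environment: records executed actions and 'renders' screenshots as text."""

    def __init__(self) -> None:
        self.log: List[str] = []

    def screenshot(self) -> str:
        # A real client would capture image bytes; a text summary suffices here.
        return f"screen after {len(self.log)} actions"

    def execute(self, action: Action) -> None:
        self.log.append(f"{action.name}:{action.arg}")

class ScriptedModel:
    """Hypothetical stand-in for the model: replays a fixed plan, then reports completion."""

    def __init__(self, plan: List[Action]) -> None:
        self.plan = list(plan)

    def propose(self, request: str, screenshot: str, history: List[str]) -> Optional[Action]:
        # The real model would reason over the request, screenshot and history;
        # this stub ignores them and returns the next planned step, or None when finished.
        if len(history) < len(self.plan):
            return self.plan[len(history)]
        return None

def run_agent(request: str, model: ScriptedModel, browser: FakeBrowser,
              max_steps: int = 10) -> List[str]:
    """Loop: screenshot -> model proposes -> client executes -> record, until done."""
    history: List[str] = []
    for _ in range(max_steps):  # cap iterations so a confused agent cannot loop forever
        shot = browser.screenshot()
        action = model.propose(request, shot, history)
        if action is None:  # the model signals that the task is complete
            break
        browser.execute(action)
        history.append(f"{action.name}:{action.arg}")
    return history

# Usage: a three-step task run to completion.
model = ScriptedModel([Action("click", "search box"), Action("type", "gemini"), Action("click", "submit")])
browser = FakeBrowser()
history = run_agent("search for gemini", model, browser)
print(history)  # ['click:search box', 'type:gemini', 'click:submit']
```

The `max_steps` cap is a design choice of this sketch. It guarantees that the loop terminates even if the model never reports completion.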